10 Consumer Panelists Who Can Derail Your Market Research Surveys

TLDR: Your data quality will suffer if you aren’t being critical of your audience and their behavior.

- Panels hire professional survey takers instead of reaching real consumers.

- Survey companies may have updated their own methodology, but the subcontractors and marketplaces they use may not have.

- Many professional survey takers try to game the system, resulting in low data quality. We’ll show you 10 types to watch out for.

These days, you can buy just about anything online. From clothes to furniture to even a babysitter for your kids, there are few things you can’t get with a smartphone and a working credit card.

There are some exceptions, however. Some products just don’t sell well online.

The most common example is cars. No one wants to buy a car without a test drive and a look under the hood.

The reason for this is simple: it is a big, expensive, complex product. A lot can go wrong and, the truth of the matter is, a lot of car dealers try to game the system.

In short, smart consumers always kick the tires and look under the hood before they drive off the lot.

What does this have to do with consumer research?

Survey companies have worked tirelessly to find survey takers ready and willing to weigh in on your product or service. And they got really good at selling it.

But is this data really any good? Survey panel participation has been on the decline, and now traditional companies have to create a panel from wherever they can: subcontractors, marketplaces, or worse.

This means there’s not a lot of transparency surrounding these survey respondents, or what motivates them to respond in the first place. And with a limited pool of panelists available to take surveys, these companies will resort to drastic measures to get the respondents they need.

So what? Why should I care, so long as they can take my survey?

Unfortunately, not all survey responses are created equal when it comes to market research. Some distribution methods may be spammy or pulled from web ads. Some respondents may be bots or faked accounts. You just don’t know.

At Pollfish, we avoid panels altogether. Instead, we source our own audience of real consumers by partnering with app publishers, using these apps for randomized, yet targeted, survey distribution.

Using this survey methodology means we never worry about having enough respondents or reaching the right ones.

Since we have over half a billion people in our network, we can be pickier about our data quality. That means we remove any responses that show inconsistency, too much consistency, or bot-like behavior, as well as any from respondents who don’t match your survey’s targeting criteria.

We proudly admit that our cutting-edge AI and Machine Learning algorithms throw out 30% of our survey responses. This keeps our data quality high and our audience data as transparent as possible.

You heard that right: 30%.

30% seems like a lot. Are there really that many bad people in the world?

Still not convinced? You might be surprised to know that there are 10 common problem survey takers to watch out for, and more types are popping up all the time. We’ll show you how to spot them and explain why you should eradicate them from your survey once and for all.

Let’s Get Real.

Professionals: Survey panel companies pay respondents to take surveys. The amount per survey is often low, so professionals have to take a ton of surveys every day to make money. This leads to all sorts of negative behavior that results in low-quality responses.

Rule-Breakers: As they speed through questions, Rule-Breakers will often skip key instructions, filling in answers incorrectly or offering incomplete responses. It can be hard to know whether these users have done this on purpose or have just been careless, but either way, their data isn’t accurate, so it’s best to filter them out.

Speeders: Much like rule-breakers, speeders are moving far too fast to complete a survey accurately. But if you aren’t tracking for this, how would you know? Fortunately, Pollfish uses AI and Machine Learning to estimate and aggregate response times for each question. If a respondent’s times fall below a certain threshold, we toss their responses out.
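To make the speeder idea concrete, here is a minimal Python sketch of one simple way to flag speeders. It is not Pollfish’s algorithm: it assumes you already have per-question response times for every respondent, uses the median time per question as the “typical” pace, and flags anyone whose total time is under a hypothetical cutoff of 40% of that typical total. The function name `flag_speeders` and the `ratio` value are illustrative assumptions.

```python
from statistics import median


def flag_speeders(times, ratio=0.4):
    """Return the IDs of respondents who answered much faster than typical.

    times: dict mapping respondent ID -> list of per-question times (seconds),
           with every list in the same question order.
    ratio: hypothetical cutoff; a respondent is flagged when their total time
           is below ratio * (sum of the per-question median times).
    """
    n_questions = len(next(iter(times.values())))
    # Typical (median) time spent on each question across all respondents.
    medians = [median(t[q] for t in times.values()) for q in range(n_questions)]
    expected_total = sum(medians)
    return {rid for rid, t in times.items() if sum(t) < ratio * expected_total}


responses = {
    "r1": [8.0, 12.5, 6.0, 10.0],
    "r2": [7.5, 11.0, 5.5, 9.0],
    "r3": [1.0, 1.2, 0.8, 1.1],  # clicks through in about 4 seconds total
    "r4": [9.0, 14.0, 7.0, 12.0],
}
print(flag_speeders(responses))  # {'r3'}
```

Medians are used instead of averages so that one unusually slow respondent (say, someone who left the survey open while making coffee) doesn’t drag the benchmark upward and make normal respondents look like speeders.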

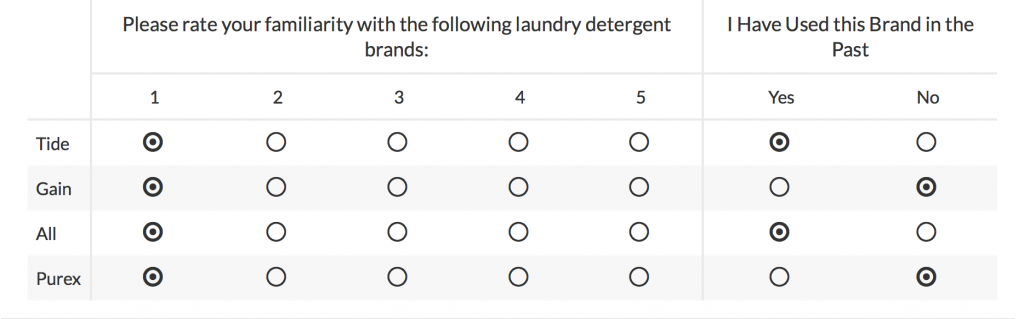

Straight-Liners: Straight-liners make it really easy to tell they are not being straight with us (see what we did there?). These respondents will answer all multiple-choice questions exactly the same way all the way down, creating a straight line and an easily identified response pattern to be removed.
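Straight-lining is the easiest pattern to check for. As a rough illustration (a hypothetical helper, not Pollfish’s implementation), a grid of multiple-choice answers is straight-lined when it contains only one distinct choice:

```python
def is_straight_liner(answers):
    """Return True if every answer in a multiple-choice grid is identical.

    answers: list of choices in question order, e.g. ["B", "B", "B"].
    A single answer isn't enough to call it a pattern, so that returns False.
    """
    return len(answers) > 1 and len(set(answers)) == 1


print(is_straight_liner(["B", "B", "B", "B", "B"]))  # True
print(is_straight_liner(["B", "C", "B", "A", "B"]))  # False
```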

Near-Straight-Liners: Some survey takers know that the panel they sit on has rules against straight-lining. To avoid running afoul of these rules, near-straight-liners will answer all but 1 or 2 questions in a straight line. Since we use real respondents with no incentive to exhibit this behavior, we don’t encounter it much, but we still have our Machine Learning algorithms in place to spot these tricks and toss responses we believe to be fake.
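A simple rule-based version of this check (a sketch only, not the Machine Learning approach described above) counts how many answers differ from the most common choice. The `max_deviations=2` default mirrors the “all but 1 or 2 questions” pattern, and `min_questions=6` is an assumed minimum grid size; both are illustrative, not recommended production values.

```python
from collections import Counter


def is_near_straight_liner(answers, max_deviations=2, min_questions=6):
    """Return True if all but at most `max_deviations` answers share one choice.

    answers: list of choices in question order.
    Grids shorter than `min_questions` (a hypothetical threshold) are skipped,
    since a short grid can look uniform by chance.
    Note: a perfect straight-liner (zero deviations) is also flagged.
    """
    if len(answers) < min_questions:
        return False
    _, top_count = Counter(answers).most_common(1)[0]
    return len(answers) - top_count <= max_deviations


print(is_near_straight_liner(list("CCCACCCBCC")))  # True: only 2 answers break the line
print(is_near_straight_liner(list("ABCDABCDAB")))  # False
```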

Alternators: Just like straight-liners or near-straight-liners, alternators will answer in familiar patterns (think A, B, C, D, C, B, A) all the way down the sheet. Luckily, these patterns also get caught by our AI and Machine Learning algorithms as low-quality responses and are tossed out with the rest.
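Here is a hypothetical rule-based sketch of how the two most common alternator patterns could be caught. It is not Pollfish’s model. It assumes answers are letters from an ordered option list (`options="ABCDE"` is an assumption) and checks for a zigzag, where every answer sits exactly one option above or below the previous one (A, B, C, D, C, B, A), and for a short repeating cycle of 2 to 4 answers (A, B, C, D, A, B, C, D).

```python
def is_alternator(answers, options="ABCDE", min_questions=6):
    """Flag answer sequences that follow a mechanical, non-uniform pattern.

    answers: list of choices in question order; each must appear in `options`
             (otherwise options.index raises ValueError).
    min_questions: hypothetical minimum grid size before a pattern is called.
    """
    if len(answers) < min_questions:
        return False
    pos = [options.index(a) for a in answers]
    # Zigzag: each step moves exactly one option up or down the scale.
    zigzag = all(abs(b - a) == 1 for a, b in zip(pos, pos[1:]))
    # Cycle: a block of 2-4 answers (not all identical) repeats at least twice.
    cycle = any(
        len(set(answers[:p])) > 1
        and all(answers[i] == answers[i - p] for i in range(p, len(answers)))
        for p in range(2, 5)
        if len(answers) >= 2 * p
    )
    return zigzag or cycle


print(is_alternator(list("ABCDCBA")))   # True (zigzag)
print(is_alternator(list("ABCDABCD")))  # True (repeating cycle)
print(is_alternator(list("BDACEBAD")))  # False
```

Straight lines are deliberately excluded here (a cycle must contain more than one distinct answer), since those are handled by the straight-liner checks.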

Bots: Because taking surveys is an easy way to make some quick cash, some intrepid hustlers with computer savvy will create bots to take surveys for them. This is where old-fashioned methodologies like river sampling come up short: they let anonymous users click through from web ads to take surveys without any vetting. River sampling has been viewed as a flawed methodology for a while now, especially because it is so easily exploited by bots. But while most survey panels don’t practice these debunked methodologies directly, they buy sample from providers who likely still do. So beware: without proper vetting, you may be surveying bots.


Fake Accounts: Just like bots, some survey takers will create fake accounts, allowing them to take the same survey multiple times. While most survey panels check IP addresses to protect against this, these protections are easily circumvented. Since our surveys are sent to real people organically through apps, our respondents won’t receive the same survey more than once and there is no way for them to repeat or restart a survey once they’ve gotten started.

Block Voters: This type of respondent is not so much fraudulent as generally harmful to a survey’s credibility. Block voting typically happens when, for example, 5 bands are competing to win a contest. One band may do a better job of getting the word out, incentivizing support, or even flooding the site so opponents can’t vote. Block voting can be prevented by only allowing users to vote once, blocking multiple votes from the same IP address, or distributing surveys, as Pollfish does, only to real, active users.
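The “one vote per IP address” safeguard mentioned above can be sketched in a few lines of Python. This is an illustrative example with a hypothetical function name, not any vendor’s actual implementation; the IP addresses come from the ranges reserved for documentation.

```python
from collections import Counter


def tally_one_vote_per_ip(votes):
    """Count votes, keeping only the first vote cast from each IP address.

    votes: iterable of (ip_address, choice) pairs in the order they arrived.
    """
    seen_ips = set()
    tally = Counter()
    for ip, choice in votes:
        if ip in seen_ips:
            continue  # repeat vote from an IP that has already been counted
        seen_ips.add(ip)
        tally[choice] += 1
    return tally


votes = [
    ("203.0.113.5", "Band A"),
    ("203.0.113.5", "Band A"),  # same IP flooding votes for Band A
    ("203.0.113.5", "Band A"),
    ("198.51.100.7", "Band B"),
    ("192.0.2.44", "Band B"),
]
print(dict(tally_one_vote_per_ip(votes)))  # {'Band A': 1, 'Band B': 2}
```

As noted under Fake Accounts, IP checks like this are easy to circumvent (for example, by switching networks), and they can also undercount legitimate voters who share one IP address, such as people on the same office or household network.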

Biased Respondents: This last one is the toughest to safeguard against. Market researchers are familiar with how the bias of the crowd can impact responses. However, some survey sites have forums where survey takers can discuss answers, share their responses, and more. Professional survey takers, speeders, and others use these forums and shared answers to get through surveys quickly without adding their own, natural feedback. The internet is a big place, and you, as a researcher, cannot easily protect against this. The only way to do it is to avoid survey panels altogether.

Find out how Pollfish measures up against the top consumer panel providers by comparing us to Google Surveys, SurveyMonkey or Survata.

Do you want to distribute your survey? Pollfish offers you access to millions of targeted consumers to get survey responses from $1 per complete. Launch your survey today.
